My old science professor used to say, “Be careful what you test for.” This is as true in digital analytics as it is in a scientific environment. We’re often asked to look at and comment on the results of A/B tests, and many times we find the results are invalid because of the way the test was set up.

Here we offer some simple advice on how to create fair tests that give you more meaningful insights into your optimisation program.

Keep things simple

Try to keep things as simple as possible. If you have complicated rules around browser version or geolocation, make sure there is a valid reason for them. Trying to be too clever overcomplicates a test, and you’ll spend your time segmenting your results looking for answers that aren’t always there.

Keep things fair

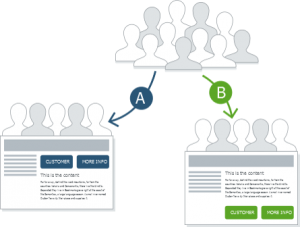

Be aware of traffic sources that may bypass your A/B selection. We’ve worked with clients who set up large tests only to find that paid search traffic had not been considered and entered only one arm of the test. This ‘hot’ traffic converts very well and heavily biases the results. Also, if you run an ecommerce site, consider only allowing customers on their first visit to your site into the test. This way you don’t have to worry about giving existing users a mixed experience.
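
One way to catch a bypassed traffic source early is to count visits per arm for each source and flag any source that is missing from an arm. The sketch below is only an illustration: the function names, the `(arm, source)` tuple format and the sample data are all made up for this example and would need mapping to whatever your analytics export looks like.

```python
from collections import defaultdict


def source_counts_by_arm(visits):
    """Count visits per traffic source and test arm.

    `visits` is a list of (arm, source) tuples (a hypothetical format).
    Returns {source: {arm: count}} with every arm present for every source.
    """
    counts = defaultdict(lambda: defaultdict(int))
    arms = set()
    for arm, source in visits:
        counts[source][arm] += 1
        arms.add(arm)
    return {
        source: {arm: counts[source].get(arm, 0) for arm in sorted(arms)}
        for source in counts
    }


def sources_missing_from_an_arm(visits):
    """Return the traffic sources that never appear in at least one arm."""
    table = source_counts_by_arm(visits)
    return sorted(
        source for source, per_arm in table.items() if min(per_arm.values()) == 0
    )


# Made-up sample: paid search only ever lands in arm "A".
sample_visits = [
    ("A", "organic"), ("B", "organic"),
    ("A", "email"), ("B", "email"),
    ("A", "paid_search"), ("A", "paid_search"),
]
flagged = sources_missing_from_an_arm(sample_visits)
print(flagged)
```

A source that shows up in only one arm is a sign that some landing pages or campaign URLs are skipping the split, and is worth fixing before you trust the headline numbers.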

Understand your metrics

Similarly, be mindful of your test success metrics. A client once conducted a test to see if encouraging existing customers to sign in helped sales. The test itself was set up perfectly, but the results suggested that encouraging customers to log in didn’t increase sales. The reason was that their key measure of success was existing customer conversion rate (existing customer orders / existing customer visits). With more customers identifying themselves online, the number of existing customer visits increased by more than the number of existing customer orders, so conversion actually went down even though more units were sold. Consider 10 / 100 = 10% and 15 / 200 = 7.5%: both orders and visits increased, but visits by more than orders.
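
The arithmetic is easy to reproduce. This small sketch uses the illustrative figures from the example above (not real client data) and a hypothetical `conversion_rate` helper to show orders rising while the rate falls.

```python
def conversion_rate(orders, visits):
    """Existing customer conversion rate: orders divided by identified visits."""
    return orders / visits


# Illustrative figures from the text, not real client data.
before_orders, before_visits = 10, 100
after_orders, after_visits = 15, 200

before_rate = conversion_rate(before_orders, before_visits)
after_rate = conversion_rate(after_orders, after_visits)

# Relative growth in the numerator and denominator.
order_growth = after_orders / before_orders - 1
visit_growth = after_visits / before_visits - 1

print(f"Conversion before: {before_rate:.1%}, after: {after_rate:.1%}")
print(f"Orders grew {order_growth:.0%}, visits grew {visit_growth:.0%}")
```

Whenever the denominator of a ratio metric can change as a side effect of the test, it is worth reporting the raw counts next to the rate.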

Don’t rush

Finally, make sure your test has finished before you analyse the results. You don’t need to be a mathematician to understand statistical significance. There are loads of websites where you can simply type in your results and they’ll tell you whether the result is genuine or a fluke, e.g. http://www.usereffect.com/split-test-calculator. Some of the more advanced websites will estimate a test basket size for you. Don’t be tempted by the ‘50 conversions per arm’ theory; it is not right.
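
If you would rather check a result yourself, a two-proportion z-test is one common way to do it. The sketch below is a minimal version using only the Python standard library. It is not necessarily the method used by the calculator linked above, and the function name and the visitor and conversion figures are invented for illustration.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference in conversion rate between two arms.

    conv_a, conv_b: conversions in each arm; n_a, n_b: visitors in each arm.
    Returns (z, p_value), using the pooled-proportion standard error.
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Invented example: both arms have at least 50 conversions.
z, p_value = two_proportion_z_test(conv_a=50, n_a=1000, conv_b=65, n_b=1000)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

In this made-up case both arms have at least 50 conversions, yet the p-value is well above the conventional 0.05 threshold, which is one illustration of why a fixed conversion count is not a reliable stopping rule.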